Compare commits

No commits in common. "master" and "staging" have entirely different histories.
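To illustrate what "no commits in common" means, here is a minimal sketch, assuming each history is given as a mapping from commit ID to parent IDs. The commit IDs and both helpers are hypothetical and are not taken from this repository. Two branches have a merge base only if their ancestor sets intersect.

```python
def ancestors(tip, parents):
    """Return the set of commits reachable from `tip`, including `tip` itself."""
    seen = set()
    stack = [tip]
    while stack:
        commit = stack.pop()
        if commit in seen:
            continue
        seen.add(commit)
        stack.extend(parents.get(commit, []))
    return seen


def common_commits(tip_a, tip_b, parents):
    """Commits shared by both histories; an empty set means no merge base exists."""
    return ancestors(tip_a, parents) & ancestors(tip_b, parents)


# Hypothetical histories with two unrelated root commits.
parents = {
    "m2": ["m1"], "m1": [],   # stands in for "master"
    "s2": ["s1"], "s1": [],   # stands in for "staging"
}
print(common_commits("m2", "s2", parents))  # set()
```

With no shared ancestor, the comparison cannot be computed relative to a merge base, so every file is shown as wholly added, removed, or changed.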

6

.bazelrc

|

|

@@ -87,9 +87,6 @@ build:ios_fat --config=ios

|

|||

build:ios_fat --ios_multi_cpus=armv7,arm64

|

||||

build:ios_fat --watchos_cpus=armv7k

|

||||

|

||||

build:ios_sim_fat --config=ios

|

||||

build:ios_sim_fat --ios_multi_cpus=x86_64,sim_arm64

|

||||

|

||||

build:darwin_x86_64 --apple_platform_type=macos

|

||||

build:darwin_x86_64 --macos_minimum_os=10.12

|

||||

build:darwin_x86_64 --cpu=darwin_x86_64

|

||||

|

|

@@ -98,9 +95,6 @@ build:darwin_arm64 --apple_platform_type=macos

|

|||

build:darwin_arm64 --macos_minimum_os=10.16

|

||||

build:darwin_arm64 --cpu=darwin_arm64

|

||||

|

||||

# Turn off maximum stdout size

|

||||

build --experimental_ui_max_stdouterr_bytes=-1

|

||||

|

||||

# This bazelrc file is meant to be written by a setup script.

|

||||

try-import %workspace%/.configure.bazelrc
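As a rough illustration of how the `build:<name>` lines above group flags under a named config, the following sketch collects options per prefix from bazelrc-style text. It is a simplified reading aid, not Bazel's actual parser: it skips `import` and `try-import` lines, does not handle quoting, and does not expand nested `--config` references. The `group_configs` helper is hypothetical, and the sample uses lines shown in the hunk above.

```python
from collections import defaultdict


def group_configs(text):
    """Map each 'command[:config]' prefix to the list of options given for it."""
    groups = defaultdict(list)
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blanks, comments, and import directives (not handled in this sketch).
        if not line or line.startswith("#") or line.startswith(("import ", "try-import ")):
            continue
        key, *options = line.split()
        groups[key].extend(options)
    return dict(groups)


sample = """\
build:darwin_arm64 --macos_minimum_os=10.16
build:darwin_arm64 --cpu=darwin_arm64
# Turn off maximum stdout size
build --experimental_ui_max_stdouterr_bytes=-1
try-import %workspace%/.configure.bazelrc
"""
for key, options in group_configs(sample).items():
    print(key, options)
```

Options grouped under a prefix such as `build:darwin_arm64` only take effect when the build is invoked with `--config=darwin_arm64`, whereas plain `build` options apply to every build.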

|

||||

|

||||

|

|

|

|||

|

|

@@ -1 +1 @@

|

|||

6.1.1

|

||||

5.2.0
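The hunk headers in this comparison follow the unified diff convention `-start,count +start,count`, where an omitted count means 1. As a reading aid, a minimal sketch of decoding such a header is shown below. The `parse_hunk_header` helper is hypothetical, and it tolerates a single leading `@` because some renderings drop one.

```python
import re

HUNK_RE = re.compile(r"^@{1,2} -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@")


def parse_hunk_header(line):
    """Return (old_start, old_count, new_start, new_count) for a hunk header line."""
    match = HUNK_RE.match(line)
    if match is None:
        raise ValueError(f"not a hunk header: {line!r}")
    old_start, old_count, new_start, new_count = match.groups()
    # An omitted count defaults to 1 in the unified diff format.
    return (
        int(old_start),
        int(old_count) if old_count is not None else 1,
        int(new_start),
        int(new_count) if new_count is not None else 1,
    )


print(parse_hunk_header("@@ -87,9 +87,6 @@ build:ios_fat --config=ios"))  # (87, 9, 87, 6)
print(parse_hunk_header("@@ -1 +1 @@"))  # (1, 1, 1, 1)
print(parse_hunk_header("@@ -0,0 +1,27 @@"))  # (0, 0, 1, 27)
```

Read this way, `-87,9 +87,6` says the hunk covers nine lines on the old side and six on the new side, so three lines were dropped. A header of the form `-0,0 +1,27` marks a newly added 27-line file, and `-1,70 +0,0` marks a deleted 70-line file.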

|

||||

|

|

|

|||

27

.github/ISSUE_TEMPLATE/00-build-installation-issue.md

vendored

Normal file

|

|

@@ -0,0 +1,27 @@

|

|||

---

|

||||

name: "Build/Installation Issue"

|

||||

about: Use this template for build/installation issues

|

||||

labels: type:build/install

|

||||

|

||||

---

|

||||

<em>Please make sure that this is a build/installation issue and also refer to the [troubleshooting](https://google.github.io/mediapipe/getting_started/troubleshooting.html) documentation before raising any issues.</em>

|

||||

|

||||

**System information** (Please provide as much relevant information as possible)

|

||||

- OS Platform and Distribution (e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4):

|

||||

- Compiler version (e.g. gcc/g++ 8 /Apple clang version 12.0.0):

|

||||

- Programming Language and version ( e.g. C++ 14, Python 3.6, Java ):

|

||||

- Installed using virtualenv? pip? Conda? (if python):

|

||||

- [MediaPipe version](https://github.com/google/mediapipe/releases):

|

||||

- Bazel version:

|

||||

- XCode and Tulsi versions (if iOS):

|

||||

- Android SDK and NDK versions (if android):

|

||||

- Android [AAR](https://google.github.io/mediapipe/getting_started/android_archive_library.html) ( if android):

|

||||

- OpenCV version (if running on desktop):

|

||||

|

||||

**Describe the problem**:

|

||||

|

||||

|

||||

**[Provide the exact sequence of commands / steps that you executed before running into the problem](https://google.github.io/mediapipe/getting_started/getting_started.html):**

|

||||

|

||||

**Complete Logs:**

|

||||

Include Complete Log information or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached:
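The Markdown template above starts with a front matter block delimited by `---` lines, carrying `name`, `about`, and `labels`. A minimal sketch of reading that block is shown below, assuming only flat `key: value` pairs. It is not a YAML parser, and `read_front_matter` is a hypothetical helper.

```python
def read_front_matter(text):
    """Return (metadata, body) for a document starting with a '---' delimited block."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    metadata = {}
    for index, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Everything after the closing delimiter is the template body.
            return metadata, "\n".join(lines[index + 1:])
        if ":" in line:
            # Split on the first colon only, so values may contain colons.
            key, value = line.split(":", 1)
            metadata[key.strip()] = value.strip().strip('"')
    # No closing delimiter found: treat the whole text as body.
    return {}, text


template = """\
---
name: "Build/Installation Issue"
about: Use this template for build/installation issues
labels: type:build/install

---
**Describe the problem**:
"""
meta, body = read_front_matter(template)
print(meta["name"])    # Build/Installation Issue
print(meta["labels"])  # type:build/install
```

Splitting on the first colon only matters here because label values such as `type:build/install` contain a colon themselves.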

|

||||

|

|

@@ -1,70 +0,0 @@

|

|||

name: Task Issue

|

||||

description: Use this template for assistance with using MediaPipe Tasks (developers.google.com/mediapipe/solutions) to deploy on-device ML solutions (e.g. gesture recognition etc.) on supported platforms

|

||||

labels: 'type:task'

|

||||

body:

|

||||

- type: markdown

|

||||

id: linkmodel

|

||||

attributes:

|

||||

value: Please make sure that this is a [Tasks](https://developers.google.com/mediapipe/solutions) issue.

|

||||

- type: dropdown

|

||||

id: customcode_model

|

||||

attributes:

|

||||

label: Have I written custom code (as opposed to using a stock example script provided in MediaPipe)

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: os_model

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: task-sdk-version

|

||||

attributes:

|

||||

label: MediaPipe Tasks SDK version

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: taskname

|

||||

attributes:

|

||||

label: Task name (e.g. Image classification, Gesture recognition etc.)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: programminglang

|

||||

attributes:

|

||||

label: Programming Language and version (e.g. C++, Python, Java)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: current_model

|

||||

attributes:

|

||||

label: Describe the actual behavior

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: expected_model

|

||||

attributes:

|

||||

label: Describe the expected behaviour

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: what-happened_model

|

||||

attributes:

|

||||

label: Standalone code/steps you may have used to try to get what you need

|

||||

description: If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab, GitHub repo link or anything that we can use to reproduce the problem

|

||||

render: shell

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: other_info

|

||||

attributes:

|

||||

label: Other info / Complete Logs

|

||||

description: Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

26

.github/ISSUE_TEMPLATE/10-solution-issue.md

vendored

Normal file

|

|

@@ -0,0 +1,26 @@

|

|||

---

|

||||

name: "Solution Issue"

|

||||

about: Use this template for assistance with a specific mediapipe solution, such as "Pose" or "Iris", including inference model usage/training, solution-specific calculators, etc.

|

||||

labels: type:support

|

||||

|

||||

---

|

||||

<em>Please make sure that this is a [solution](https://google.github.io/mediapipe/solutions/solutions.html) issue.</em>

|

||||

|

||||

**System information** (Please provide as much relevant information as possible)

|

||||

- Have I written custom code (as opposed to using a stock example script provided in Mediapipe):

|

||||

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04, Android 11, iOS 14.4):

|

||||

- [MediaPipe version](https://github.com/google/mediapipe/releases):

|

||||

- Bazel version:

|

||||

- Solution (e.g. FaceMesh, Pose, Holistic):

|

||||

- Programming Language and version ( e.g. C++, Python, Java):

|

||||

|

||||

**Describe the expected behavior:**

|

||||

|

||||

**Standalone code you may have used to try to get what you need :**

|

||||

|

||||

If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab/repo link /any notebook:

|

||||

|

||||

**Other info / Complete Logs :**

|

||||

Include any logs or source code that would be helpful to

|

||||

diagnose the problem. If including tracebacks, please include the full

|

||||

traceback. Large logs and files should be attached:

|

||||

|

|

@@ -1,71 +0,0 @@

|

|||

name: Model Maker Issues

|

||||

description: Use this template for assistance with using MediaPipe Model Maker (developers.google.com/mediapipe/solutions) to create custom on-device ML solutions.

|

||||

labels: 'type:modelmaker'

|

||||

body:

|

||||

- type: markdown

|

||||

id: linkmodel

|

||||

attributes:

|

||||

value: Please make sure that this is a [Model Maker](https://developers.google.com/mediapipe/solutions) issue

|

||||

- type: dropdown

|

||||

id: customcode_model

|

||||

attributes:

|

||||

label: Have I written custom code (as opposed to using a stock example script provided in MediaPipe)

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: os_model

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: pythonver

|

||||

attributes:

|

||||

label: Python Version

|

||||

placeholder: e.g. 3.7, 3.8

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: modelmakerver

|

||||

attributes:

|

||||

label: MediaPipe Model Maker version

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: taskname

|

||||

attributes:

|

||||

label: Task name (e.g. Image classification, Gesture recognition etc.)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: current_model

|

||||

attributes:

|

||||

label: Describe the actual behavior

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: expected_model

|

||||

attributes:

|

||||

label: Describe the expected behaviour

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: what-happened_model

|

||||

attributes:

|

||||

label: Standalone code/steps you may have used to try to get what you need

|

||||

description: If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab, GitHub repo link or anything that we can use to reproduce the problem

|

||||

render: shell

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: other_info

|

||||

attributes:

|

||||

label: Other info / Complete Logs

|

||||

description: Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

|

|

@@ -1,61 +0,0 @@

|

|||

name: Studio Issues

|

||||

description: Use this template for assistance with the MediaPipe Studio application. If this doesn’t look right, choose a different type.

|

||||

labels: 'type:support'

|

||||

body:

|

||||

- type: markdown

|

||||

id: linkmodel

|

||||

attributes:

|

||||

value: Please make sure that this is a MediaPipe Studio issue.

|

||||

- type: input

|

||||

id: os_model

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: browserver

|

||||

attributes:

|

||||

label: Browser and Version

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: hardware

|

||||

attributes:

|

||||

label: Any microphone or camera hardware

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: url

|

||||

attributes:

|

||||

label: URL that shows the problem

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: current_model

|

||||

attributes:

|

||||

label: Describe the actual behavior

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: expected_model

|

||||

attributes:

|

||||

label: Describe the expected behaviour

|

||||

validations:

|

||||

required: false

|

||||

- type: textarea

|

||||

id: what-happened_model

|

||||

attributes:

|

||||

label: Standalone code/steps you may have used to try to get what you need

|

||||

description: If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab, GitHub repo link or anything that we can use to reproduce the problem

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

- type: textarea

|

||||

id: other_info

|

||||

attributes:

|

||||

label: Other info / Complete Logs

|

||||

description: Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

|

|

@@ -1,60 +0,0 @@

|

|||

name: Feature Request Issues

|

||||

description: Use this template for raising a feature request. If this doesn’t look right, choose a different type.

|

||||

labels: 'type:feature'

|

||||

body:

|

||||

- type: markdown

|

||||

id: linkmodel

|

||||

attributes:

|

||||

value: Please make sure that this is a feature request.

|

||||

- type: input

|

||||

id: solution

|

||||

attributes:

|

||||

label: MediaPipe Solution (you are using)

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: pgmlang

|

||||

attributes:

|

||||

label: Programming language

|

||||

placeholder: C++/typescript/Python/Objective C/Android Java

|

||||

validations:

|

||||

required: false

|

||||

- type: dropdown

|

||||

id: willingcon

|

||||

attributes:

|

||||

label: Are you willing to contribute it

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: behaviour

|

||||

attributes:

|

||||

label: Describe the feature and the current behaviour/state

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: api_change

|

||||

attributes:

|

||||

label: Will this change the current API? How?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: benifit

|

||||

attributes:

|

||||

label: Who will benefit with this feature?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: use_case

|

||||

attributes:

|

||||

label: Please specify the use cases for this feature

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: info_other

|

||||

attributes:

|

||||

label: Any Other info

|

||||

validations:

|

||||

required: false

|

||||

|

|

@@ -1,108 +0,0 @@

|

|||

name: Build/Install Issue

|

||||

description: Use this template to report build/install issue

|

||||

labels: 'type:build/install'

|

||||

body:

|

||||

- type: markdown

|

||||

id: link

|

||||

attributes:

|

||||

value: Please make sure that this is a build/installation issue and also refer to the [troubleshooting](https://google.github.io/mediapipe/getting_started/troubleshooting.html) documentation before raising any issues.

|

||||

- type: input

|

||||

id: os

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

description:

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: compilerversion

|

||||

attributes:

|

||||

label: Compiler version

|

||||

description:

|

||||

placeholder: e.g. gcc/g++ 8 /Apple clang version 12.0.0

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: programminglang

|

||||

attributes:

|

||||

label: Programming Language and version

|

||||

description:

|

||||

placeholder: e.g. C++ 14, Python 3.6, Java

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: virtualenv

|

||||

attributes:

|

||||

label: Installed using virtualenv? pip? Conda?(if python)

|

||||

description:

|

||||

placeholder:

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: mediapipever

|

||||

attributes:

|

||||

label: MediaPipe version

|

||||

description:

|

||||

placeholder: e.g. 0.8.11, 0.9.1

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: bazelver

|

||||

attributes:

|

||||

label: Bazel version

|

||||

description:

|

||||

placeholder: e.g. 5.0, 5.1

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: xcodeversion

|

||||

attributes:

|

||||

label: XCode and Tulsi versions (if iOS)

|

||||

description:

|

||||

placeholder:

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: sdkndkversion

|

||||

attributes:

|

||||

label: Android SDK and NDK versions (if android)

|

||||

description:

|

||||

placeholder:

|

||||

validations:

|

||||

required: false

|

||||

- type: dropdown

|

||||

id: androidaar

|

||||

attributes:

|

||||

label: Android AAR (if android)

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: opencvversion

|

||||

attributes:

|

||||

label: OpenCV version (if running on desktop)

|

||||

description:

|

||||

placeholder:

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: what-happened

|

||||

attributes:

|

||||

label: Describe the problem

|

||||

description: Provide the exact sequence of commands / steps that you executed before running into the [problem](https://google.github.io/mediapipe/getting_started/getting_started.html)

|

||||

placeholder: Tell us what you see!

|

||||

value: "A bug happened!"

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: code-to-reproduce

|

||||

attributes:

|

||||

label: Complete Logs

|

||||

description: Include Complete Log information or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

placeholder: Tell us what you see!

|

||||

value:

|

||||

render: shell

|

||||

validations:

|

||||

required: true

|

||||

110

.github/ISSUE_TEMPLATE/16-bug-issue-template.yaml

vendored

|

|

@@ -1,110 +0,0 @@

|

|||

name: Bug Issues

|

||||

description: Use this template for reporting a bug. If this doesn’t look right, choose a different type.

|

||||

labels: 'type:bug'

|

||||

body:

|

||||

- type: markdown

|

||||

id: link

|

||||

attributes:

|

||||

value: Please make sure that this is a bug and also refer to the [troubleshooting](https://google.github.io/mediapipe/getting_started/troubleshooting.html), FAQ documentation before raising any issues.

|

||||

- type: dropdown

|

||||

id: customcode_model

|

||||

attributes:

|

||||

label: Have I written custom code (as opposed to using a stock example script provided in MediaPipe)

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: os

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

description:

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: mobile_device

|

||||

attributes:

|

||||

label: Mobile device if the issue happens on mobile device

|

||||

description:

|

||||

placeholder: e.g. iPhone 8, Pixel 2, Samsung Galaxy

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: browser_version

|

||||

attributes:

|

||||

label: Browser and version if the issue happens on browser

|

||||

placeholder: e.g. Google Chrome 109.0.5414.119, Safari 16.3

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: programminglang

|

||||

attributes:

|

||||

label: Programming Language and version

|

||||

placeholder: e.g. C++, Python, Java

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: mediapipever

|

||||

attributes:

|

||||

label: MediaPipe version

|

||||

description:

|

||||

placeholder: e.g. 0.8.11, 0.9.1

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: bazelver

|

||||

attributes:

|

||||

label: Bazel version

|

||||

description:

|

||||

placeholder: e.g. 5.0, 5.1

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: solution

|

||||

attributes:

|

||||

label: Solution

|

||||

placeholder: e.g. FaceMesh, Pose, Holistic

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: sdkndkversion

|

||||

attributes:

|

||||

label: Android Studio, NDK, SDK versions (if issue is related to building in Android environment)

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: xcode_ver

|

||||

attributes:

|

||||

label: Xcode & Tulsi version (if issue is related to building for iOS)

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: current_model

|

||||

attributes:

|

||||

label: Describe the actual behavior

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: expected_model

|

||||

attributes:

|

||||

label: Describe the expected behaviour

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: what-happened_model

|

||||

attributes:

|

||||

label: Standalone code/steps you may have used to try to get what you need

|

||||

description: If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab, GitHub repo link or anything that we can use to reproduce the problem

|

||||

render: shell

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: other_info

|

||||

attributes:

|

||||

label: Other info / Complete Logs

|

||||

description: Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

|

|

@@ -1,73 +0,0 @@

|

|||

name: Documentation issue

|

||||

description: Use this template for documentation related issues. If this doesn’t look right, choose a different type.

|

||||

labels: 'type:doc-bug'

|

||||

body:

|

||||

- type: markdown

|

||||

id: link

|

||||

attributes:

|

||||

value: Thank you for submitting a MediaPipe documentation issue. The MediaPipe docs are open source! To get involved, read the documentation Contributor Guide

|

||||

- type: markdown

|

||||

id: url

|

||||

attributes:

|

||||

value: URL(s) with the issue Please provide a link to the documentation entry, for example https://github.com/google/mediapipe/blob/master/docs/solutions/face_mesh.md#models

|

||||

- type: input

|

||||

id: description

|

||||

attributes:

|

||||

label: Description of issue (what needs changing)

|

||||

description: Kinds of documentation problems

|

||||

- type: input

|

||||

id: clear_desc

|

||||

attributes:

|

||||

label: Clear description

|

||||

description: For example, why should someone use this method? How is it useful?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: link

|

||||

attributes:

|

||||

label: Correct links

|

||||

description: Is the link to the source code correct?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: parameter

|

||||

attributes:

|

||||

label: Parameters defined

|

||||

description: Are all parameters defined and formatted correctly?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: returns

|

||||

attributes:

|

||||

label: Returns defined

|

||||

description: Are return values defined?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: raises

|

||||

attributes:

|

||||

label: Raises listed and defined

|

||||

description: Are the errors defined? For example,

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: usage

|

||||

attributes:

|

||||

label: Usage example

|

||||

description: Is there a usage example? See the API guide-on how to write testable usage examples.

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: visual

|

||||

attributes:

|

||||

label: Request visuals, if applicable

|

||||

description: Are there currently visuals? If not, will it clarify the content?

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: pull

|

||||

attributes:

|

||||

label: Submit a pull request?

|

||||

description: Are you planning to also submit a pull request to fix the issue? See the [docs](https://github.com/google/mediapipe/blob/master/CONTRIBUTING.md)

|

||||

validations:

|

||||

required: false

|

||||

|

|

@@ -1,78 +0,0 @@

|

|||

name: Solution(Legacy) Issue

|

||||

description: Use this template for assistance with a specific Mediapipe solution (google.github.io/mediapipe/solutions) such as "Pose", including inference model usage/training, solution-specific calculators etc.

|

||||

labels: 'type:support'

|

||||

body:

|

||||

- type: markdown

|

||||

id: linkmodel

|

||||

attributes:

|

||||

value: Please make sure that this is a [solution](https://google.github.io/mediapipe/solutions/solutions.html) issue.

|

||||

- type: dropdown

|

||||

id: customcode_model

|

||||

attributes:

|

||||

label: Have I written custom code (as opposed to using a stock example script provided in MediaPipe)

|

||||

options:

|

||||

- 'Yes'

|

||||

- 'No'

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: os_model

|

||||

attributes:

|

||||

label: OS Platform and Distribution

|

||||

placeholder: e.g. Linux Ubuntu 16.04, Android 11, iOS 14.4

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: mediapipe_version

|

||||

attributes:

|

||||

label: MediaPipe version

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: bazel_version

|

||||

attributes:

|

||||

label: Bazel version

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: solution

|

||||

attributes:

|

||||

label: Solution

|

||||

placeholder: e.g. FaceMesh, Pose, Holistic

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: programminglang

|

||||

attributes:

|

||||

label: Programming Language and version

|

||||

placeholder: e.g. C++, Python, Java

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: current_model

|

||||

attributes:

|

||||

label: Describe the actual behavior

|

||||

validations:

|

||||

required: false

|

||||

- type: input

|

||||

id: expected_model

|

||||

attributes:

|

||||

label: Describe the expected behaviour

|

||||

validations:

|

||||

required: false

|

||||

- type: textarea

|

||||

id: what-happened_model

|

||||

attributes:

|

||||

label: Standalone code/steps you may have used to try to get what you need

|

||||

description: If there is a problem, provide a reproducible test case that is the bare minimum necessary to generate the problem. If possible, please share a link to Colab, GitHub repo link or anything that we can use to reproduce the problem

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

- type: textarea

|

||||

id: other_info

|

||||

attributes:

|

||||

label: Other info / Complete Logs

|

||||

description: Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached

|

||||

render: shell

|

||||

validations:

|

||||

required: false

|

||||

51

.github/ISSUE_TEMPLATE/20-documentation-issue.md

vendored

Normal file

|

|

@@ -0,0 +1,51 @@

|

|||

---

|

||||

name: "Documentation Issue"

|

||||

about: Use this template for documentation related issues

|

||||

labels: type:docs

|

||||

|

||||

---

|

||||

Thank you for submitting a MediaPipe documentation issue.

|

||||

The MediaPipe docs are open source! To get involved, read the documentation Contributor Guide

|

||||

## URL(s) with the issue:

|

||||

|

||||

Please provide a link to the documentation entry, for example: https://github.com/google/mediapipe/blob/master/docs/solutions/face_mesh.md#models

|

||||

|

||||

## Description of issue (what needs changing):

|

||||

|

||||

Kinds of documentation problems:

|

||||

|

||||

### Clear description

|

||||

|

||||

For example, why should someone use this method? How is it useful?

|

||||

|

||||

### Correct links

|

||||

|

||||

Is the link to the source code correct?

|

||||

|

||||

### Parameters defined

|

||||

Are all parameters defined and formatted correctly?

|

||||

|

||||

### Returns defined

|

||||

|

||||

Are return values defined?

|

||||

|

||||

### Raises listed and defined

|

||||

|

||||

Are the errors defined? For example,

|

||||

|

||||

### Usage example

|

||||

|

||||

Is there a usage example?

|

||||

|

||||

See the API guide:

|

||||

on how to write testable usage examples.

|

||||

|

||||

### Request visuals, if applicable

|

||||

|

||||

Are there currently visuals? If not, will it clarify the content?

|

||||

|

||||

### Submit a pull request?

|

||||

|

||||

Are you planning to also submit a pull request to fix the issue? See the docs

|

||||

https://github.com/google/mediapipe/blob/master/CONTRIBUTING.md

|

||||

|

||||

32

.github/ISSUE_TEMPLATE/30-bug-issue.md

vendored

Normal file

|

|

@@ -0,0 +1,32 @@

|

|||

---

|

||||

name: "Bug Issue"

|

||||

about: Use this template for reporting a bug

|

||||

labels: type:bug

|

||||

|

||||

---

|

||||

<em>Please make sure that this is a bug and also refer to the [troubleshooting](https://google.github.io/mediapipe/getting_started/troubleshooting.html), FAQ documentation before raising any issues.</em>

|

||||

|

||||

**System information** (Please provide as much relevant information as possible)

|

||||

|

||||

- Have I written custom code (as opposed to using a stock example script provided in MediaPipe):

|

||||

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04, Android 11, iOS 14.4):

|

||||

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device:

|

||||

- Browser and version (e.g. Google Chrome, Safari) if the issue happens on browser:

|

||||

- Programming Language and version ( e.g. C++, Python, Java):

|

||||

- [MediaPipe version](https://github.com/google/mediapipe/releases):

|

||||

- Bazel version (if compiling from source):

|

||||

- Solution ( e.g. FaceMesh, Pose, Holistic ):

|

||||

- Android Studio, NDK, SDK versions (if issue is related to building in Android environment):

|

||||

- Xcode & Tulsi version (if issue is related to building for iOS):

|

||||

|

||||

**Describe the current behavior:**

|

||||

|

||||

**Describe the expected behavior:**

|

||||

|

||||

**Standalone code to reproduce the issue:**

|

||||

Provide a reproducible test case that is the bare minimum necessary to replicate the problem. If possible, please share a link to Colab/repo link /any notebook:

|

||||

|

||||

**Other info / Complete Logs :**

|

||||

Include any logs or source code that would be helpful to

|

||||

diagnose the problem. If including tracebacks, please include the full

|

||||

traceback. Large logs and files should be attached

|

||||

24

.github/ISSUE_TEMPLATE/40-feature-request.md

vendored

Normal file

|

|

@ -0,0 +1,24 @@

|

|||

---

|

||||

name: "Feature Request"

|

||||

about: Use this template for raising a feature request

|

||||

labels: type:feature

|

||||

|

||||

---

|

||||

<em>Please make sure that this is a feature request.</em>

|

||||

|

||||

**System information** (Please provide as much relevant information as possible)

|

||||

|

||||

- MediaPipe Solution (you are using):

|

||||

- Programming language : C++/typescript/Python/Objective C/Android Java

|

||||

- Are you willing to contribute it (Yes/No):

|

||||

|

||||

|

||||

**Describe the feature and the current behavior/state:**

|

||||

|

||||

**Will this change the current api? How?**

|

||||

|

||||

**Who will benefit with this feature?**

|

||||

|

||||

**Please specify the use cases for this feature:**

|

||||

|

||||

**Any Other info:**

|

||||

3

.github/bot_config.yml

vendored

|

|

@ -15,5 +15,4 @@

|

|||

|

||||

# A list of assignees

|

||||

assignees:

|

||||

- kuaashish

|

||||

- ayushgdev

|

||||

- sureshdagooglecom

|

||||

|

|

|

|||

34

.github/stale.yml

vendored

Normal file

|

|

@ -0,0 +1,34 @@

|

|||

# Copyright 2021 The MediaPipe Authors.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

# ============================================================================

|

||||

#

|

||||

# This file was assembled from multiple pieces, whose use is documented

|

||||

# throughout. Please refer to the TensorFlow dockerfiles documentation

|

||||

# for more information.

|

||||

|

||||

# Number of days of inactivity before an Issue or Pull Request becomes stale

|

||||

daysUntilStale: 7

|

||||

# Number of days of inactivity before a stale Issue or Pull Request is closed

|

||||

daysUntilClose: 7

|

||||

# Only issues or pull requests with all of these labels are checked if stale. Defaults to `[]` (disabled)

|

||||

onlyLabels:

|

||||

- stat:awaiting response

|

||||

# Comment to post when marking as stale. Set to `false` to disable

|

||||

markComment: >

|

||||

This issue has been automatically marked as stale because it has not had

|

||||

recent activity. It will be closed if no further activity occurs. Thank you.

|

||||

# Comment to post when removing the stale label. Set to `false` to disable

|

||||

unmarkComment: false

|

||||

closeComment: >

|

||||

Closing as stale. Please reopen if you'd like to work on this further.
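The settings in this `stale.yml` can be read as a small decision rule. The sketch below is a simplified model of that rule, not the bot's actual code: `stale_action` and its `is_stale` flag are hypothetical names, and it assumes the close timer is measured from the last activity on an item that is already marked stale.

```python
from datetime import datetime, timedelta

# Values copied from the stale.yml shown above.
DAYS_UNTIL_STALE = 7
DAYS_UNTIL_CLOSE = 7
ONLY_LABELS = {"stat:awaiting response"}


def stale_action(labels, last_activity, now, is_stale=False):
    """Return "skip", "none", "mark_stale", or "close" for one issue or PR.

    Hypothetical helper: a simplified model, not the bot's real code.
    """
    # onlyLabels: items missing any required label are never checked.
    if not ONLY_LABELS.issubset(labels):
        return "skip"
    idle = now - last_activity
    if is_stale:
        # Assumption: for an item already marked stale, the close timer
        # runs from its last recorded activity.
        return "close" if idle >= timedelta(days=DAYS_UNTIL_CLOSE) else "none"
    return "mark_stale" if idle >= timedelta(days=DAYS_UNTIL_STALE) else "none"
```

Under these assumptions, an issue labeled `stat:awaiting response` that stays silent is marked stale after 7 days and closed after a further 7 days, while issues without that label are left alone.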

|

||||

68

.github/workflows/stale.yaml

vendored

|

|

@ -1,68 +0,0 @@

|

|||

# Copyright 2023 The TensorFlow Authors. All Rights Reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

# ==============================================================================

|

||||

|

||||

# This workflow alerts and then closes the stale issues/PRs after specific time

|

||||

# You can adjust the behavior by modifying this file.

|

||||

# For more information, see:

|

||||

# https://github.com/actions/stale

|

||||

|

||||

name: 'Close stale issues and PRs'

|

||||

"on":

|

||||

schedule:

|

||||

- cron: "30 1 * * *"

|

||||

permissions:

|

||||

contents: read

|

||||

issues: write

|

||||

pull-requests: write

|

||||

jobs:

|

||||

stale:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: 'actions/stale@v7'

|

||||

with:

|

||||

# Comma separated list of labels that can be assigned to issues to exclude them from being marked as stale.

|

||||

exempt-issue-labels: 'override-stale'

|

||||

# Comma separated list of labels that can be assigned to PRs to exclude them from being marked as stale.

|

||||

exempt-pr-labels: "override-stale"

|

||||

# Limit the No. of API calls in one run default value is 30.

|

||||

operations-per-run: 500

|

||||

# Prevent to remove stale label when PRs or issues are updated.

|

||||

remove-stale-when-updated: true

|

||||

# List of labels to remove when issues/PRs unstale.

|

||||

labels-to-remove-when-unstale: 'stat:awaiting response'

|

||||

# comment on issue if not active for more then 7 days.

|

||||

stale-issue-message: 'This issue has been marked stale because it has no recent activity since 7 days. It will be closed if no further activity occurs. Thank you.'

|

||||

# comment on PR if not active for more then 14 days.

|

||||

stale-pr-message: 'This PR has been marked stale because it has no recent activity since 14 days. It will be closed if no further activity occurs. Thank you.'

|

||||

# comment on issue if stale for more then 7 days.

|

||||

close-issue-message: This issue was closed due to lack of activity after being marked stale for past 7 days.

|

||||

# comment on PR if stale for more then 14 days.

|

||||

close-pr-message: This PR was closed due to lack of activity after being marked stale for past 14 days.

|

||||

# Number of days of inactivity before an Issue Request becomes stale

|

||||

days-before-issue-stale: 7

|

||||

# Number of days of inactivity before a stale Issue is closed

|

||||

days-before-issue-close: 7

|

||||

# reason for closed the issue default value is not_planned

|

||||

close-issue-reason: completed

|

||||

# Number of days of inactivity before a stale PR is closed

|

||||

days-before-pr-close: 14

|

||||

# Number of days of inactivity before an PR Request becomes stale

|

||||

days-before-pr-stale: 14

|

||||

# Check for label to stale or close the issue/PR

|

||||

any-of-labels: 'stat:awaiting response'

|

||||

# override stale to stalled for PR

|

||||

stale-pr-label: 'stale'

|

||||

# override stale to stalled for Issue

|

||||

stale-issue-label: "stale"

|

||||

1

.gitignore

vendored

|

|

@ -2,6 +2,5 @@ bazel-*

|

|||

mediapipe/MediaPipe.xcodeproj

|

||||

mediapipe/MediaPipe.tulsiproj/*.tulsiconf-user

|

||||

mediapipe/provisioning_profile.mobileprovision

|

||||

node_modules/

|

||||

.configure.bazelrc

|

||||

.user.bazelrc

|

||||

|

|

|

|||

|

|

@ -1,4 +1,4 @@

|

|||

# Copyright 2022 The MediaPipe Authors.

|

||||

# Copyright 2019 The MediaPipe Authors.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

|

|

@ -14,9 +14,4 @@

|

|||

|

||||

licenses(["notice"])

|

||||

|

||||

exports_files([

|

||||

"LICENSE",

|

||||

"tsconfig.json",

|

||||

"package.json",

|

||||

"yarn.lock",

|

||||

])

|

||||

exports_files(["LICENSE"])

|

||||

|

|

|

|||

|

|

@ -30,8 +30,6 @@ RUN apt-get update && apt-get install -y --no-install-recommends \

|

|||

git \

|

||||

wget \

|

||||

unzip \

|

||||

nodejs \

|

||||

npm \

|

||||

python3-dev \

|

||||

python3-opencv \

|

||||

python3-pip \

|

||||

|

|

@ -55,13 +53,13 @@ RUN pip3 install wheel

|

|||

RUN pip3 install future

|

||||

RUN pip3 install absl-py numpy opencv-contrib-python protobuf==3.20.1

|

||||

RUN pip3 install six==1.14.0

|

||||

RUN pip3 install tensorflow

|

||||

RUN pip3 install tensorflow==2.2.0

|

||||

RUN pip3 install tf_slim

|

||||

|

||||

RUN ln -s /usr/bin/python3 /usr/bin/python

|

||||

|

||||

# Install bazel

|

||||

ARG BAZEL_VERSION=6.1.1

|

||||

ARG BAZEL_VERSION=5.2.0

|

||||

RUN mkdir /bazel && \

|

||||

wget --no-check-certificate -O /bazel/installer.sh "https://github.com/bazelbuild/bazel/releases/download/${BAZEL_VERSION}/b\

|

||||

azel-${BAZEL_VERSION}-installer-linux-x86_64.sh" && \

|

||||

|

|

|

|||

17

LICENSE

|

|

@ -199,20 +199,3 @@

|

|||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

|

||||

===========================================================================

|

||||

For files under tasks/cc/text/language_detector/custom_ops/utils/utf/

|

||||

===========================================================================

|

||||

/*

|

||||

* The authors of this software are Rob Pike and Ken Thompson.

|

||||

* Copyright (c) 2002 by Lucent Technologies.

|

||||

* Permission to use, copy, modify, and distribute this software for any

|

||||

* purpose without fee is hereby granted, provided that this entire notice

|

||||

* is included in all copies of any software which is or includes a copy

|

||||

* or modification of this software and in all copies of the supporting

|

||||

* documentation for such software.

|

||||

* THIS SOFTWARE IS BEING PROVIDED "AS IS", WITHOUT ANY EXPRESS OR IMPLIED

|

||||

* WARRANTY. IN PARTICULAR, NEITHER THE AUTHORS NOR LUCENT TECHNOLOGIES MAKE ANY

|

||||

* REPRESENTATION OR WARRANTY OF ANY KIND CONCERNING THE MERCHANTABILITY

|

||||

* OF THIS SOFTWARE OR ITS FITNESS FOR ANY PARTICULAR PURPOSE.

|

||||

*/

|

||||

|

|

|

|||

200

README.md

|

|

@ -1,121 +1,83 @@

|

|||

---

|

||||

layout: forward

|

||||

target: https://developers.google.com/mediapipe

|

||||

layout: default

|

||||

title: Home

|

||||

nav_order: 1

|

||||

---

|

||||

|

||||

----

|

||||

|

||||

|

||||

**Attention:** *We have moved to

|

||||

[https://developers.google.com/mediapipe](https://developers.google.com/mediapipe)

|

||||

as the primary developer documentation site for MediaPipe as of April 3, 2023.*

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

|

||||

## Live ML anywhere

|

||||

|

||||

**Attention**: MediaPipe Solutions Preview is an early release. [Learn

|

||||

more](https://developers.google.com/mediapipe/solutions/about#notice).

|

||||

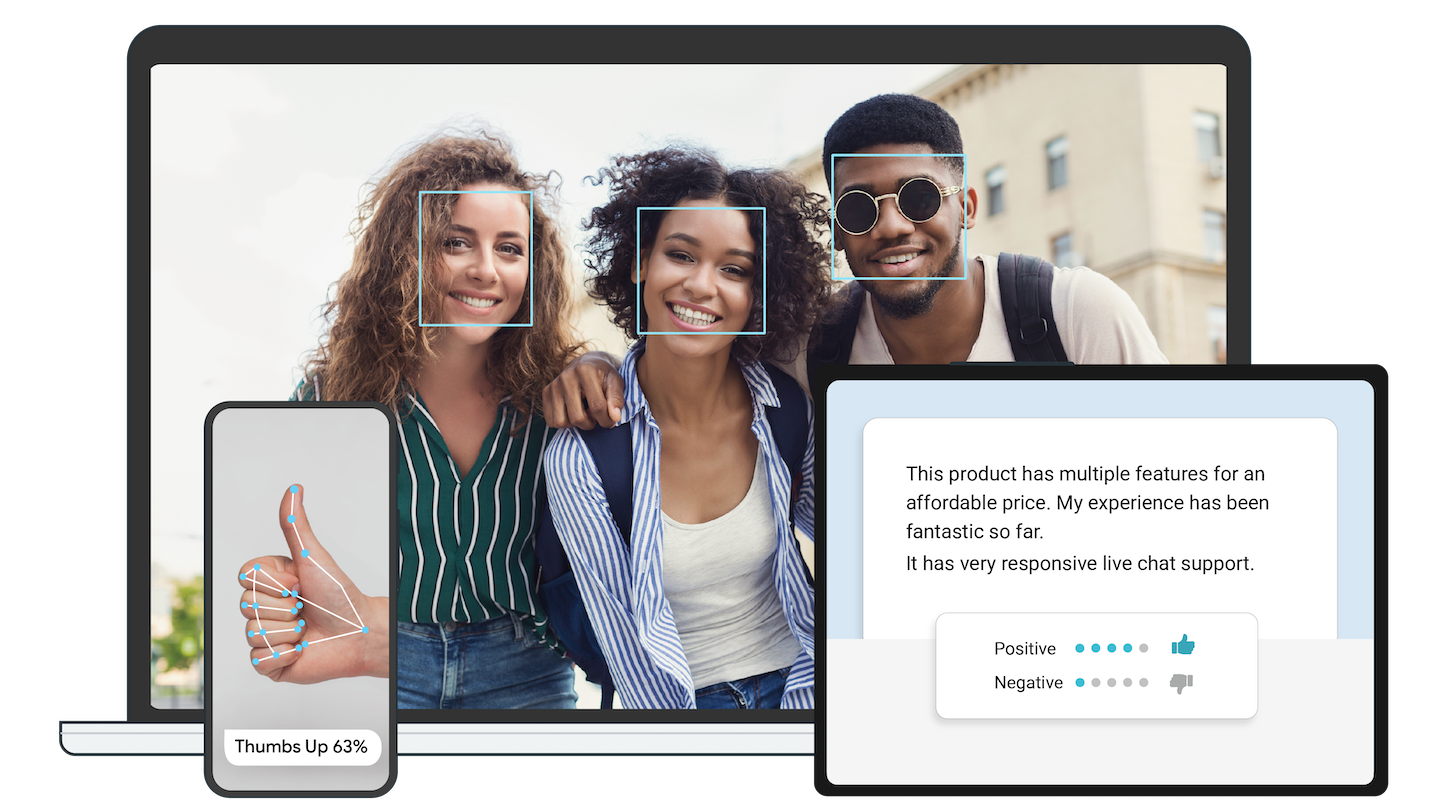

[MediaPipe](https://google.github.io/mediapipe/) offers cross-platform, customizable

|

||||

ML solutions for live and streaming media.

|

||||

|

||||

**On-device machine learning for everyone**

|

||||

|

|

||||

:------------------------------------------------------------------------------------------------------------: | :----------------------------------------------------:

|

||||

***End-to-End acceleration***: *Built-in fast ML inference and processing accelerated even on common hardware* | ***Build once, deploy anywhere***: *Unified solution works across Android, iOS, desktop/cloud, web and IoT*

|

||||

|

|

||||

***Ready-to-use solutions***: *Cutting-edge ML solutions demonstrating full power of the framework* | ***Free and open source***: *Framework and solutions both under Apache 2.0, fully extensible and customizable*

|

||||

|

||||

Delight your customers with innovative machine learning features. MediaPipe

|

||||

contains everything that you need to customize and deploy to mobile (Android,

|

||||

iOS), web, desktop, edge devices, and IoT, effortlessly.

|

||||

## ML solutions in MediaPipe

|

||||

|

||||

* [See demos](https://goo.gle/mediapipe-studio)

|

||||

* [Learn more](https://developers.google.com/mediapipe/solutions)

|

||||

Face Detection | Face Mesh | Iris | Hands | Pose | Holistic

|

||||

:----------------------------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------: | :--------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------: | :------:

|

||||

[](https://google.github.io/mediapipe/solutions/face_detection) | [](https://google.github.io/mediapipe/solutions/face_mesh) | [](https://google.github.io/mediapipe/solutions/iris) | [](https://google.github.io/mediapipe/solutions/hands) | [](https://google.github.io/mediapipe/solutions/pose) | [](https://google.github.io/mediapipe/solutions/holistic)

|

||||

|

||||

## Get started

|

||||

Hair Segmentation | Object Detection | Box Tracking | Instant Motion Tracking | Objectron | KNIFT

|

||||

:-------------------------------------------------------------------------------------------------------------------------------------: | :----------------------------------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------------------------: | :---------------------------------------------------------------------------------------------------------------------------------------------------: | :-------------------------------------------------------------------------------------------------------------------: | :---:

|

||||

[](https://google.github.io/mediapipe/solutions/hair_segmentation) | [](https://google.github.io/mediapipe/solutions/object_detection) | [](https://google.github.io/mediapipe/solutions/box_tracking) | [](https://google.github.io/mediapipe/solutions/instant_motion_tracking) | [](https://google.github.io/mediapipe/solutions/objectron) | [](https://google.github.io/mediapipe/solutions/knift)

|

||||

|

||||

You can get started with MediaPipe Solutions by checking out any of the

|

||||

developer guides for

|

||||

[vision](https://developers.google.com/mediapipe/solutions/vision/object_detector),

|

||||

[text](https://developers.google.com/mediapipe/solutions/text/text_classifier),

|

||||

and

|

||||

[audio](https://developers.google.com/mediapipe/solutions/audio/audio_classifier)

|

||||

tasks. If you need help setting up a development environment for use with

|

||||

MediaPipe Tasks, check out the setup guides for

|

||||

[Android](https://developers.google.com/mediapipe/solutions/setup_android), [web

|

||||

apps](https://developers.google.com/mediapipe/solutions/setup_web), and

|

||||

[Python](https://developers.google.com/mediapipe/solutions/setup_python).

|

||||

<!-- []() in the first cell is needed to preserve table formatting in GitHub Pages. -->

|

||||

<!-- Whenever this table is updated, paste a copy to solutions/solutions.md. -->

|

||||

|

||||

## Solutions

|

||||

[]() | [Android](https://google.github.io/mediapipe/getting_started/android) | [iOS](https://google.github.io/mediapipe/getting_started/ios) | [C++](https://google.github.io/mediapipe/getting_started/cpp) | [Python](https://google.github.io/mediapipe/getting_started/python) | [JS](https://google.github.io/mediapipe/getting_started/javascript) | [Coral](https://github.com/google/mediapipe/tree/master/mediapipe/examples/coral/README.md)

|

||||

:---------------------------------------------------------------------------------------- | :-------------------------------------------------------------: | :-----------------------------------------------------: | :-----------------------------------------------------: | :-----------------------------------------------------------: | :-----------------------------------------------------------: | :--------------------------------------------------------------------:

|

||||

[Face Detection](https://google.github.io/mediapipe/solutions/face_detection) | ✅ | ✅ | ✅ | ✅ | ✅ | ✅

|

||||

[Face Mesh](https://google.github.io/mediapipe/solutions/face_mesh) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

[Iris](https://google.github.io/mediapipe/solutions/iris) | ✅ | ✅ | ✅ | | |

|

||||

[Hands](https://google.github.io/mediapipe/solutions/hands) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

[Pose](https://google.github.io/mediapipe/solutions/pose) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

[Holistic](https://google.github.io/mediapipe/solutions/holistic) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

[Selfie Segmentation](https://google.github.io/mediapipe/solutions/selfie_segmentation) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

[Hair Segmentation](https://google.github.io/mediapipe/solutions/hair_segmentation) | ✅ | | ✅ | | |

|

||||

[Object Detection](https://google.github.io/mediapipe/solutions/object_detection) | ✅ | ✅ | ✅ | | | ✅

|

||||

[Box Tracking](https://google.github.io/mediapipe/solutions/box_tracking) | ✅ | ✅ | ✅ | | |

|

||||

[Instant Motion Tracking](https://google.github.io/mediapipe/solutions/instant_motion_tracking) | ✅ | | | | |

|

||||

[Objectron](https://google.github.io/mediapipe/solutions/objectron) | ✅ | | ✅ | ✅ | ✅ |

|

||||

[KNIFT](https://google.github.io/mediapipe/solutions/knift) | ✅ | | | | |

|

||||

[AutoFlip](https://google.github.io/mediapipe/solutions/autoflip) | | | ✅ | | |

|

||||

[MediaSequence](https://google.github.io/mediapipe/solutions/media_sequence) | | | ✅ | | |

|

||||

[YouTube 8M](https://google.github.io/mediapipe/solutions/youtube_8m) | | | ✅ | | |

|

||||

|

||||

MediaPipe Solutions provides a suite of libraries and tools for you to quickly

|

||||

apply artificial intelligence (AI) and machine learning (ML) techniques in your

|

||||

applications. You can plug these solutions into your applications immediately,

|

||||

customize them to your needs, and use them across multiple development

|

||||

platforms. MediaPipe Solutions is part of the MediaPipe [open source

|

||||

project](https://github.com/google/mediapipe), so you can further customize the

|

||||

solutions code to meet your application needs.

|

||||

See also

|

||||

[MediaPipe Models and Model Cards](https://google.github.io/mediapipe/solutions/models)

|

||||

for ML models released in MediaPipe.

|

||||

|

||||

These libraries and resources provide the core functionality for each MediaPipe

|

||||

Solution:

|

||||

## Getting started

|

||||

|

||||

* **MediaPipe Tasks**: Cross-platform APIs and libraries for deploying

|

||||

solutions. [Learn

|

||||

more](https://developers.google.com/mediapipe/solutions/tasks).

|

||||

* **MediaPipe models**: Pre-trained, ready-to-run models for use with each

|

||||

solution.

|

||||

To start using MediaPipe

|

||||

[solutions](https://google.github.io/mediapipe/solutions/solutions) with only a few

|

||||

lines of code, see example code and demos in

|

||||

[MediaPipe in Python](https://google.github.io/mediapipe/getting_started/python) and

|

||||

[MediaPipe in JavaScript](https://google.github.io/mediapipe/getting_started/javascript).

|

||||

|

||||

These tools let you customize and evaluate solutions:

|

||||

To use MediaPipe in C++, Android and iOS, which allow further customization of

|

||||

the [solutions](https://google.github.io/mediapipe/solutions/solutions) as well as

|

||||

building your own, learn how to

|

||||

[install](https://google.github.io/mediapipe/getting_started/install) MediaPipe and

|

||||

start building example applications in

|

||||

[C++](https://google.github.io/mediapipe/getting_started/cpp),

|

||||

[Android](https://google.github.io/mediapipe/getting_started/android) and

|

||||

[iOS](https://google.github.io/mediapipe/getting_started/ios).

|

||||

|

||||

* **MediaPipe Model Maker**: Customize models for solutions with your data.

|

||||

[Learn more](https://developers.google.com/mediapipe/solutions/model_maker).

|

||||

* **MediaPipe Studio**: Visualize, evaluate, and benchmark solutions in your

|

||||

browser. [Learn

|

||||

more](https://developers.google.com/mediapipe/solutions/studio).

|

||||

The source code is hosted in the

|

||||

[MediaPipe Github repository](https://github.com/google/mediapipe), and you can

|

||||

run code search using

|

||||

[Google Open Source Code Search](https://cs.opensource.google/mediapipe/mediapipe).

|

||||

|

||||

### Legacy solutions

|

||||

|

||||

We have ended support for [these MediaPipe Legacy Solutions](https://developers.google.com/mediapipe/solutions/guide#legacy)

|

||||

as of March 1, 2023. All other MediaPipe Legacy Solutions will be upgraded to

|

||||

a new MediaPipe Solution. See the [Solutions guide](https://developers.google.com/mediapipe/solutions/guide#legacy)

|

||||

for details. The [code repository](https://github.com/google/mediapipe/tree/master/mediapipe)

|

||||

and prebuilt binaries for all MediaPipe Legacy Solutions will continue to be

|

||||

provided on an as-is basis.

|

||||

|

||||

For more on the legacy solutions, see the [documentation](https://github.com/google/mediapipe/tree/master/docs/solutions).

|

||||

|

||||

## Framework

|

||||

|

||||

To start using MediaPipe Framework, [install MediaPipe

|

||||

Framework](https://developers.google.com/mediapipe/framework/getting_started/install)

|

||||

and start building example applications in C++, Android, and iOS.

|

||||

|

||||

[MediaPipe Framework](https://developers.google.com/mediapipe/framework) is the

|

||||

low-level component used to build efficient on-device machine learning

|

||||

pipelines, similar to the premade MediaPipe Solutions.

|

||||

|

||||

Before using MediaPipe Framework, familiarize yourself with the following key

|

||||

[Framework

|

||||

concepts](https://developers.google.com/mediapipe/framework/framework_concepts/overview.md):

|

||||

|

||||

* [Packets](https://developers.google.com/mediapipe/framework/framework_concepts/packets.md)

|

||||

* [Graphs](https://developers.google.com/mediapipe/framework/framework_concepts/graphs.md)

|

||||

* [Calculators](https://developers.google.com/mediapipe/framework/framework_concepts/calculators.md)

|

||||

|

||||

## Community

|

||||

|

||||

* [Slack community](https://mediapipe.page.link/joinslack) for MediaPipe

|

||||

users.

|

||||

* [Discuss](https://groups.google.com/forum/#!forum/mediapipe) - General

|

||||

community discussion around MediaPipe.

|

||||

* [Awesome MediaPipe](https://mediapipe.page.link/awesome-mediapipe) - A

|

||||

curated list of awesome MediaPipe related frameworks, libraries and

|

||||

software.

|

||||

|

||||

## Contributing

|

||||

|

||||

We welcome contributions. Please follow these

|

||||

[guidelines](https://github.com/google/mediapipe/blob/master/CONTRIBUTING.md).

|

||||

|

||||

We use GitHub issues for tracking requests and bugs. Please post questions to

|

||||

the MediaPipe Stack Overflow with a `mediapipe` tag.

|

||||

|

||||

## Resources

|

||||

|

||||

### Publications

|

||||

## Publications

|

||||

|

||||

* [Bringing artworks to life with AR](https://developers.googleblog.com/2021/07/bringing-artworks-to-life-with-ar.html)

|

||||

in Google Developers Blog

|

||||

|

|

@ -124,8 +86,7 @@ the MediaPipe Stack Overflow with a `mediapipe` tag.

|

|||

* [SignAll SDK: Sign language interface using MediaPipe is now available for

|

||||

developers](https://developers.googleblog.com/2021/04/signall-sdk-sign-language-interface-using-mediapipe-now-available.html)

|

||||

in Google Developers Blog

|

||||

* [MediaPipe Holistic - Simultaneous Face, Hand and Pose Prediction, on

|

||||

Device](https://ai.googleblog.com/2020/12/mediapipe-holistic-simultaneous-face.html)

|

||||

* [MediaPipe Holistic - Simultaneous Face, Hand and Pose Prediction, on Device](https://ai.googleblog.com/2020/12/mediapipe-holistic-simultaneous-face.html)

|

||||

in Google AI Blog

|

||||

* [Background Features in Google Meet, Powered by Web ML](https://ai.googleblog.com/2020/10/background-features-in-google-meet.html)

|

||||

in Google AI Blog

|

||||

|

|

@ -153,6 +114,43 @@ the MediaPipe Stack Overflow with a `mediapipe` tag.

|

|||

in Google AI Blog

|

||||

* [MediaPipe: A Framework for Building Perception Pipelines](https://arxiv.org/abs/1906.08172)

|

||||

|

||||

### Videos

|

||||

## Videos

|

||||

|

||||

* [YouTube Channel](https://www.youtube.com/c/MediaPipe)

|

||||

|

||||

## Events

|

||||

|

||||

* [MediaPipe Seattle Meetup, Google Building Waterside, 13 Feb 2020](https://mediapipe.page.link/seattle2020)

|

||||

* [AI Nextcon 2020, 12-16 Feb 2020, Seattle](http://aisea20.xnextcon.com/)

|

||||

* [MediaPipe Madrid Meetup, 16 Dec 2019](https://www.meetup.com/Madrid-AI-Developers-Group/events/266329088/)

|

||||

* [MediaPipe London Meetup, Google 123 Building, 12 Dec 2019](https://www.meetup.com/London-AI-Tech-Talk/events/266329038)

|

||||

* [ML Conference, Berlin, 11 Dec 2019](https://mlconference.ai/machine-learning-advanced-development/mediapipe-building-real-time-cross-platform-mobile-web-edge-desktop-video-audio-ml-pipelines/)

|

||||

* [MediaPipe Berlin Meetup, Google Berlin, 11 Dec 2019](https://www.meetup.com/Berlin-AI-Tech-Talk/events/266328794/)

|

||||

* [The 3rd Workshop on YouTube-8M Large Scale Video Understanding Workshop,

|

||||

Seoul, Korea ICCV

|

||||